There are many aspects of IoT/connected devices that appeal to me. I like looking at data; it's interesting. I like automation; it can be fun and convenient. Unfortunately, there are also many aspects of the current IoT landscape that are extremely unappealing, universally and not just to me. The obvious solution, then, is to build my own :)

And that's what I've started doing. Over the past five years, little by little, whenever I've wanted to do something, I've put something together from whatever I had lying around. Originally I used a web-hosted server to gather and store all my data, but I got annoyed each time the internet cut out. In hindsight, I'm not sure why I had so many connectivity issues back then, but there were enough of them that I decided the best option was to host everything locally, and that's where we are today.

Server

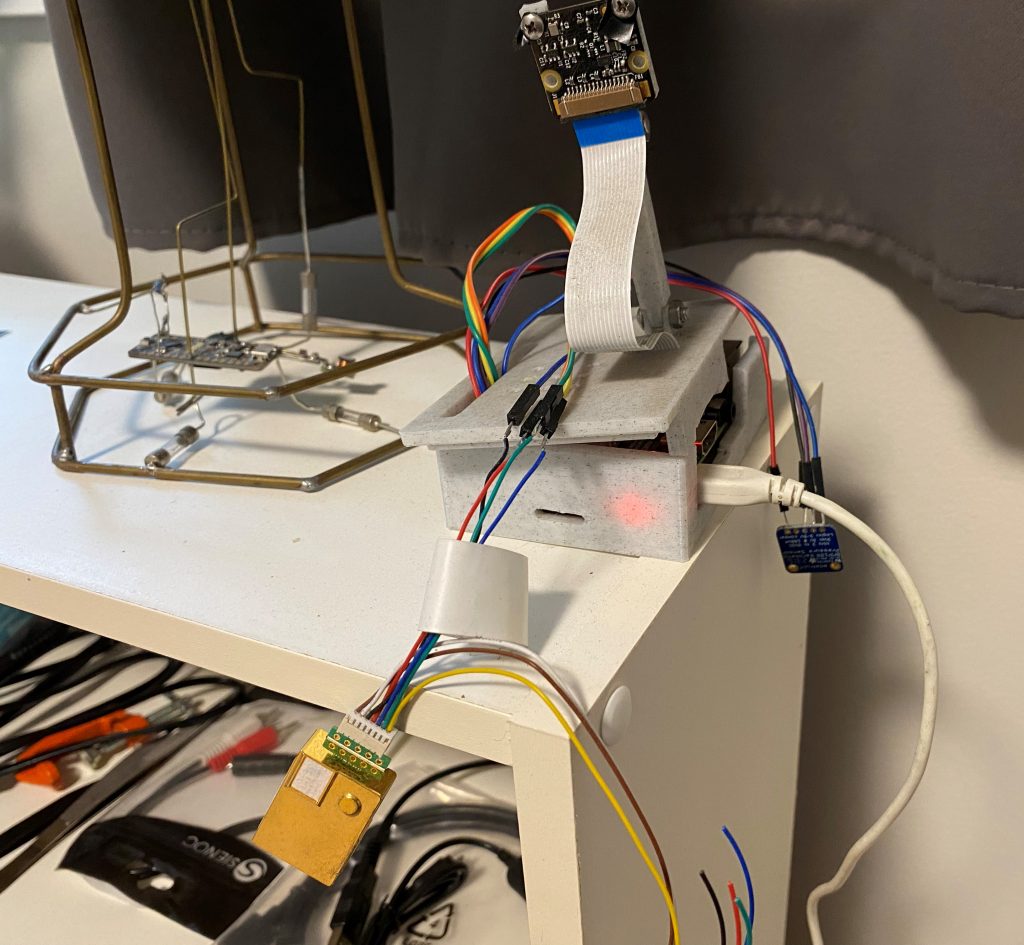

The server is a Raspberry Pi: one I had lying around, which was already submitting temperature and air pressure data to my old server. That server was just a shared hosting site with a few basic PHP scripts talking to a SQL database. To provide some flexibility, allow for customisation and make up for my lack of web-dev experience, I chose to do it all in Python. I'm using the CherryPy library to present a basic HTML web interface that summarises what's going on, and that also handles all the data requests coming in from various local sources. If the internet goes down, it doesn't matter, because everything is stored and presented on the local network.
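For illustration, here is a minimal sketch of the local storage side, assuming SQLite as the database (the post doesn't say what the Pi actually uses). The `ReadingStore` class and its `readings` table are hypothetical names. A CherryPy handler method decorated with `@cherrypy.expose` could call `add()` for each incoming reading and use `latest()` to build the summary page.

```python
import sqlite3
import threading
import time


class ReadingStore:
    """Hypothetical local store for sensor readings (SQLite is an assumption)."""

    def __init__(self, path=":memory:"):
        # One shared connection guarded by a lock, so a threaded web server can use it
        self.conn = sqlite3.connect(path, check_same_thread=False)
        self.lock = threading.Lock()
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS readings ("
            " source TEXT NOT NULL, metric TEXT NOT NULL,"
            " value REAL NOT NULL, ts REAL NOT NULL)"
        )

    def add(self, source, metric, value, ts=None):
        """Record one reading; the timestamp defaults to now."""
        with self.lock, self.conn:  # the connection context commits or rolls back
            self.conn.execute(
                "INSERT INTO readings (source, metric, value, ts) VALUES (?, ?, ?, ?)",
                (source, metric, float(value), time.time() if ts is None else ts),
            )

    def latest(self):
        """Most recent value for each (source, metric) pair, for a summary page."""
        with self.lock:
            # With a single MAX() aggregate, SQLite takes the bare `value`
            # column from the row holding the maximum timestamp.
            rows = self.conn.execute(
                "SELECT source, metric, value, MAX(ts) FROM readings"
                " GROUP BY source, metric"
            ).fetchall()
        return {(source, metric): value for source, metric, value, _ in rows}
```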

At times, like when I'm away from home and need to monitor something, I can open another port to make it accessible from the internet, but this is off by default, mainly for security.

What does it do?

As I worked on integrating the few sensors I had, I realised that the platform I was creating could do more than just save some data and show it to me. Well, more than just weather data. Running on Python made it easy to script all kinds of integrations, and as I created more of them, I saw the value in having a standard, task-based framework on which to build them all.

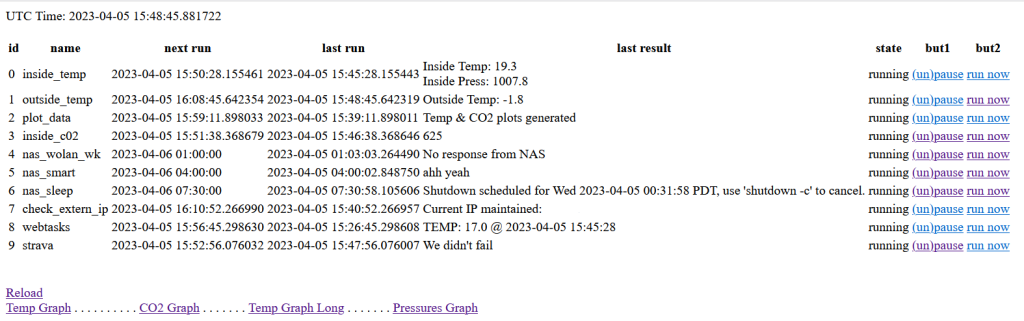

The basis of this is a config file, read at startup, that defines which tasks need to be loaded and when they must run. After that, there's nothing left to do: all the tasks run as scheduled, doing what they must. The main web interface shows a status for each task, including when it last ran and when its next run is scheduled, as well as options to start and stop tasks or run them immediately.
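A rough sketch of such a config-driven scheduler, assuming an INI-style file parsed with `configparser` (the actual config format isn't described). The section names, the `interval_minutes`/`enabled` keys and the `Task`/`Scheduler` classes are all hypothetical; the methods mirror the status, start, stop and run-now options described above.

```python
import configparser
import time

# Hypothetical config: one section per task
CONFIG = """
[outside_temperature]
interval_minutes = 20
enabled = yes

[plot_graphs]
interval_minutes = 180
enabled = no
"""


class Task:
    def __init__(self, name, func, interval_s, enabled):
        self.name = name
        self.func = func
        self.interval_s = interval_s
        self.enabled = enabled
        self.last_run = None
        self.next_run = 0.0  # due as soon as the scheduler starts

    def run(self, now):
        self.func()
        self.last_run = now
        self.next_run = now + self.interval_s


class Scheduler:
    def __init__(self, config_text, registry):
        """`registry` maps each config section name to the function it runs."""
        parser = configparser.ConfigParser()
        parser.read_string(config_text)
        self.tasks = {
            name: Task(
                name,
                registry[name],
                parser.getint(name, "interval_minutes") * 60,
                parser.getboolean(name, "enabled"),
            )
            for name in parser.sections()
        }

    def tick(self, now=None):
        """Run every enabled task whose next run time has arrived."""
        now = time.time() if now is None else now
        for task in self.tasks.values():
            if task.enabled and now >= task.next_run:
                task.run(now)

    def run_now(self, name, now=None):
        self.tasks[name].run(time.time() if now is None else now)

    def start(self, name):
        self.tasks[name].enabled = True

    def stop(self, name):
        self.tasks[name].enabled = False

    def status(self):
        """(enabled, last_run, next_run) per task, for the web interface."""
        return {t.name: (t.enabled, t.last_run, t.next_run) for t in self.tasks.values()}
```

In practice `tick()` would be called from a loop such as `while True: scheduler.tick(); time.sleep(30)` in a background thread, and the web interface would render `status()`.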

This scheduler approach is simple. It makes the system easy to expand, and having it all run on Python means it's easy for me to think of something I want done, implement it, and feed all the results back to the 'server' directly.

Connected items

So what all is included in my setup?

- Raspberry Pi

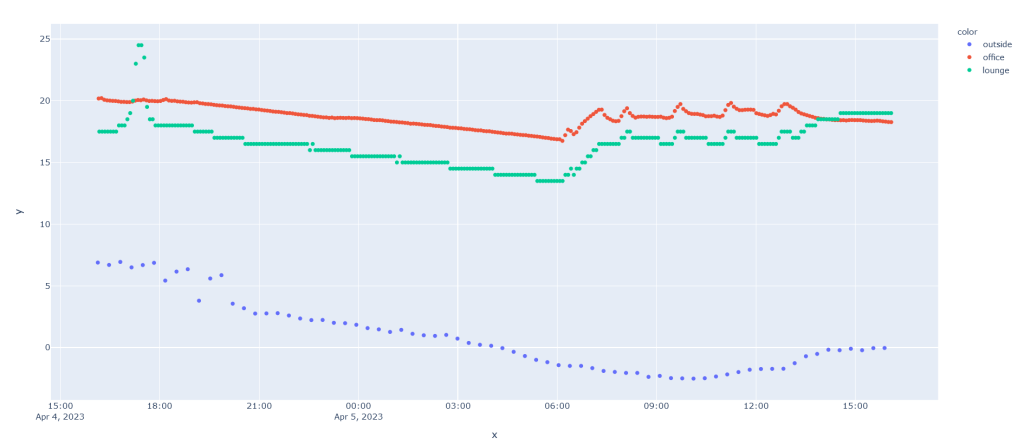

- The Raspberry Pi itself has a BMP180 and an MH-Z19 connected directly to it, which it polls occasionally for the latest temperature, air pressure and CO2 levels in my office.

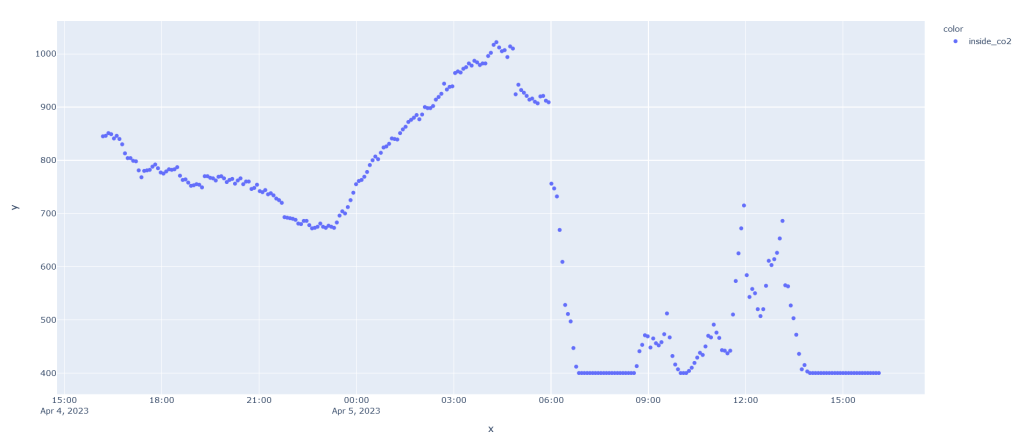

- The CO2 sensor was pretty cheap, and its accuracy isn't amazing, not helped by its 'auto-calibration'. But it still gives interesting results. It's especially easy to see the build-up in my office when the door is closed, as well as the drop every time the heating runs in the winter, bringing fresh air in. It's certainly more accurate than this.
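The MH-Z19 is read over UART. The host sends a 9-byte "read CO2" command (`0xFF 0x01 0x86 0x00 0x00 0x00 0x00 0x00 0x79`) and gets a 9-byte reply carrying the concentration in bytes 2 and 3, with a checksum in the last byte. Below is a sketch of that exchange, assuming the sensor sits on the Pi's UART at 9600 baud. `read_co2` accepts any object with `write()`/`read()`, such as a pyserial `serial.Serial("/dev/serial0", 9600, timeout=1)` (the device path is an assumption).

```python
# "Read CO2 concentration" command for the MH-Z19
READ_CO2 = bytes([0xFF, 0x01, 0x86, 0x00, 0x00, 0x00, 0x00, 0x00, 0x79])


def checksum(packet):
    """MH-Z19 checksum: two's complement of the sum of bytes 1..7."""
    return (-sum(packet[1:8])) & 0xFF


def parse_co2(response):
    """Validate a 9-byte reply and return the CO2 level in ppm."""
    if len(response) != 9 or response[0] != 0xFF or response[1] != 0x86:
        raise ValueError("unexpected MH-Z19 response")
    if checksum(response) != response[8]:
        raise ValueError("MH-Z19 checksum mismatch")
    return response[2] * 256 + response[3]


def read_co2(port):
    """Send the read command on an open serial port and parse the reply."""
    port.write(READ_CO2)
    return parse_co2(port.read(9))
```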

- ESP32

- I 'attended' one of the Hackaday Remoticon sessions a couple of years ago, where someone from DigiKey presented an IoT platform (Machinechat) that they were selling. It did a lot of what I wanted, but the free tier was pretty limited, and why would I pay for something when I can do it myself for free ;) I still had the microcontroller and temperature sensor left over, so I repurposed them to connect to my own server and feed in temperatures from downstairs.

- ESP32

- A more recent addition to the collection. It isn't tied to a specific task; the server just has some extra code I've implemented for logging and status. When we moved into our rental, we were given one of the large garage remote controls. But, especially in the summer, my wife and I mostly cycle, and these remotes are bulky and annoying. We bought one of the smaller remotes, but we're often out separately. So now we can open the garage via Wi-Fi.

- The ESP runs a basic web server, just waiting for a request to open the garage door. I still need to install a sensor and feed its data back to the server. And if I'm doing that, I might as well monitor the temperature in the garage too…
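The door-opening endpoint can be sketched as a tiny HTTP server. This is a desktop-Python stand-in using the standard library's `http.server`, not the ESP32 firmware (which would be MicroPython or Arduino code and isn't shown in the post). The `/open` path, the POST-only rule and the `pulse_relay` stub are all hypothetical.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def pulse_relay():
    """Hypothetical stub: on the ESP32 this would briefly drive the GPIO pin
    wired across the garage remote's button."""
    print("relay pulsed")


def handle_request(method, path, trigger=pulse_relay):
    """Decide the response; only POST /open fires the door."""
    if path != "/open":
        return 404, "not found"
    if method != "POST":
        return 405, "use POST"
    trigger()
    return 200, "opening"


class GarageHandler(BaseHTTPRequestHandler):
    def _respond(self):
        status, body = handle_request(self.command, self.path)
        data = body.encode()
        self.send_response(status)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    do_GET = _respond
    do_POST = _respond


def serve(port=8080):
    """Block forever, answering requests from the local network."""
    ThreadingHTTPServer(("0.0.0.0", port), GarageHandler).serve_forever()
```

Keeping the decision in `handle_request` separates it from the HTTP plumbing, and requiring POST means a browser prefetch or a stray GET can't open the door.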

Other Tasks

Beyond the local devices actively pushing data to the server, there are a number of tasks that get run for ad-hoc items.

- Outside Temperature

- Lacking a connected outdoor thermometer, I just pull data from a weather API every 20 minutes instead.
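The post doesn't name the weather API, so this sketch assumes Open-Meteo's forecast endpoint, which needs no API key, requesting the `temperature_2m` variable under `current`. `build_url`, `parse_temperature` and `fetch_outside_temperature` are illustrative names. The scheduler would call `fetch_outside_temperature` every 20 minutes and store the result like any other reading.

```python
import json
import urllib.parse
import urllib.request

# Assumed provider: Open-Meteo's free forecast endpoint
FORECAST_API = "https://api.open-meteo.com/v1/forecast"


def build_url(lat, lon):
    """Request only the current 2 m air temperature for one location."""
    query = urllib.parse.urlencode(
        {"latitude": lat, "longitude": lon, "current": "temperature_2m"}
    )
    return f"{FORECAST_API}?{query}"


def parse_temperature(payload):
    """Pull the current temperature (°C) out of the JSON response."""
    return float(json.loads(payload)["current"]["temperature_2m"])


def fetch_outside_temperature(lat, lon, timeout=10):
    with urllib.request.urlopen(build_url(lat, lon), timeout=timeout) as resp:
        return parse_temperature(resp.read())
```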

- Data plotting

- Instead of learning how to plot data on the fly in a web-friendly format, I chose the lazy route I already had experience with: using Python's Plotly library to generate an interactive HTML graph. And because doing this on the fly on a Raspberry Pi can be very slow, I just do it every few hours and serve the last generated graph when requested. This can be improved. (UPDATE: I installed Grafana; see the screenshot at the end of the post.)
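Generating every few hours and serving the last result splits naturally into two pieces: a scheduled task that writes the graph, and a request handler that only reads it. A minimal sketch, assuming the graph lives in a single HTML file; `generate` is a hypothetical callable that returns the page, for example one built with Plotly's `fig.to_html(include_plotlyjs="cdn")`.

```python
import os


def write_graph(path, generate):
    """Scheduled task: regenerate the graph and swap it in atomically."""
    html = generate()
    tmp = path + ".tmp"
    with open(tmp, "w", encoding="utf-8") as f:
        f.write(html)
    os.replace(tmp, path)  # readers see either the old file or the new one


def latest_graph(path, placeholder="<p>No graph generated yet.</p>"):
    """Request handler: return whatever was generated last, without replotting."""
    try:
        with open(path, encoding="utf-8") as f:
            return f.read()
    except FileNotFoundError:
        return placeholder
```

Writing to a temporary file and then calling `os.replace` means a request that arrives mid-regeneration still gets the previous complete graph rather than half a file.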

- NAS Wake/Sleep

- I have a NAS downstairs, and it can be a bit noisy. I only ever need to use it at night, which is also when it needs to be on to perform internet backups. So this task uses WoL (Wake-on-LAN) to start it up in the evening, and an SSH command to put it to sleep in the wee hours of the morning.
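Wake-on-LAN itself is simple: the "magic packet" is six `0xFF` bytes followed by the target's MAC address repeated 16 times, usually sent as a UDP broadcast to port 9. Here is a sketch of the wake half. The MAC address is a placeholder, and the NAS must have WoL enabled in its settings.

```python
import socket


def magic_packet(mac):
    """Build a Wake-on-LAN payload from a MAC such as 'aa:bb:cc:dd:ee:ff'."""
    mac_bytes = bytes.fromhex(mac.replace(":", "").replace("-", ""))
    if len(mac_bytes) != 6:
        raise ValueError("a MAC address has exactly 6 bytes")
    return b"\xff" * 6 + mac_bytes * 16


def wake(mac, broadcast="255.255.255.255", port=9):
    """Broadcast the magic packet on the local network."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(magic_packet(mac), (broadcast, port))
```

The sleep half would be a call along the lines of `subprocess.run(["ssh", "nas", "<sleep command>"], check=True)`, where the host alias and the actual sleep command depend on the NAS and its OS.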

- Get external IP

- At some stage I started setting up Cloudflare to point a domain at my home server. I never completed this, but I did write a script to monitor our external IP address and e-mail me whenever it changed.
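A sketch of the IP monitor, assuming a public echo service such as `api.ipify.org` (which returns the caller's address as plain text) and an SMTP server for the e-mail. The `state` dict, the SMTP host and the addresses in `email_notify` are hypothetical placeholders.

```python
import smtplib
import urllib.request
from email.message import EmailMessage


def fetch_ip(url="https://api.ipify.org", timeout=10):
    """Ask a public echo service for our external IP (service is an assumption)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode().strip()


def check_ip(state, fetch=fetch_ip, notify=None):
    """Compare the current IP with the last one seen and notify on change.

    `state` is any dict-like store that persists between runs."""
    current = fetch()
    previous = state.get("ip")
    if current != previous:
        state["ip"] = current
        # Skip the very first run, when there is nothing to compare against
        if previous is not None and notify is not None:
            notify(previous, current)
    return current


def email_notify(previous, current, host="localhost",
                 sender="pi@example.com", to="me@example.com"):
    """Hypothetical mail settings; replace with a real SMTP host and addresses."""
    msg = EmailMessage()
    msg["Subject"] = "External IP changed"
    msg["From"] = sender
    msg["To"] = to
    msg.set_content(f"External IP changed from {previous} to {current}")
    with smtplib.SMTP(host) as smtp:
        smtp.send_message(msg)
```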

- Strava

- Earlier this year, Strava added Squash to their list of supported activities. I'd done a lot of squash sessions that were listed as Workouts, so I set about setting the record straight, using the Strava API to change every previous activity that matched my criteria to Squash. With that done, I extended the script to add weather data to my outdoor activities and to make sure all my future squash activities get listed as such (Garmin doesn't support Squash, so my activity still gets sent to Strava as a Workout, and then the script updates it). So now I have a web-hosted server that Strava notifies whenever I (or other people) complete an activity. My Raspberry Pi polls this server occasionally, runs scripts locally to determine whether anything needs to happen, and then updates activities as necessary.
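A sketch of the reclassification step the Pi might run on each queued event. The webhook event fields (`object_type`, `aspect_type`, `object_id`) and the `PUT /activities/{id}` endpoint with a `sport_type` field come from Strava's v3 API, and the access token needs the `activity:write` scope. The matching rule in `needs_squash`, the JSON request body and the injected `get_activity` helper (which would fetch the activity's details) are assumptions, not the author's code.

```python
import json
import urllib.request

STRAVA_API = "https://www.strava.com/api/v3"


def needs_squash(activity):
    """Hypothetical criterion: a Workout whose title mentions squash."""
    return (activity.get("sport_type") == "Workout"
            and "squash" in activity.get("name", "").lower())


def squash_update_request(activity_id, token):
    """Build (but don't send) a PUT that changes the activity's sport type."""
    return urllib.request.Request(
        f"{STRAVA_API}/activities/{activity_id}",
        data=json.dumps({"sport_type": "Squash"}).encode(),
        method="PUT",
        headers={"Authorization": f"Bearer {token}",
                 "Content-Type": "application/json"},
    )


def handle_event(event, token, get_activity, send=urllib.request.urlopen):
    """Process one queued webhook event; return True if an update was sent."""
    if event.get("object_type") != "activity" or event.get("aspect_type") != "create":
        return False
    activity = get_activity(event["object_id"])
    if not needs_squash(activity):
        return False
    send(squash_update_request(event["object_id"], token))
    return True
```

Injecting `get_activity` and `send` keeps the decision logic testable without network access and lets the same code run over a backlog of old activities.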

- Express Entry

- At one stage we had an application in for Canadian Express Entry. New results were usually released once a week, but not at fixed times, and occasionally more than once a week. So I set up this task to scrape the site where the results were posted and e-mail me whenever it was updated. Some time after it was no longer relevant to us, the website changed so that it no longer provided the information in response to a standard HTTP request, so I stopped running it.

I started this project a long time ago, and would often not look at the code for months or years, then update it to do something I wanted. It certainly has a lot of shortcomings and plenty of room for improvement, and it would benefit from following many practices I'd consider good programming these days but that weren't top of mind at the time. That being said, it works: the fact that I only touch it when I want to make an improvement is evidence of that. My biggest ongoing concern is when the Raspberry Pi's SD card is going to pack up :D

And then

Most of the code that runs on the Raspberry Pi is available in the repo below. I'm still adding some of the Strava integration to it, but I need to make some more improvements first.

UPDATE: I installed Grafana, so pretty: